Personal AI Supercomputer Based on NVIDIA DGX Spark Platform for Deep Learning

Accelerated computing is becoming the foundation of modern IT systems, especially where demands related to AI, data analysis and image processing are growing. In practice, this means choosing not only the right GPU but an entire hardware architecture that delivers performance, stability and room to scale.

In this article, we explain accelerated computing from a practical, hardware point of view and show what types of GPU computers and AI servers are used today and how to match them to real workloads.

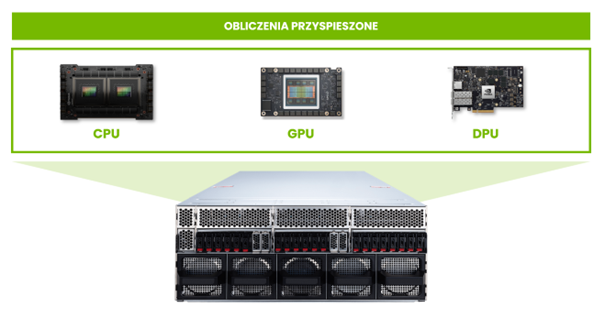

Accelerated computing is an approach to data processing in which the most computationally demanding tasks are handled by specialized accelerators (primarily GPUs, and increasingly also DPUs) rather than by a conventional CPU alone. From a hardware perspective, this means systems designed with acceleration in mind: an appropriate PCIe architecture, efficient power delivery and cooling, and the ability to run high-TDP GPU cards reliably.

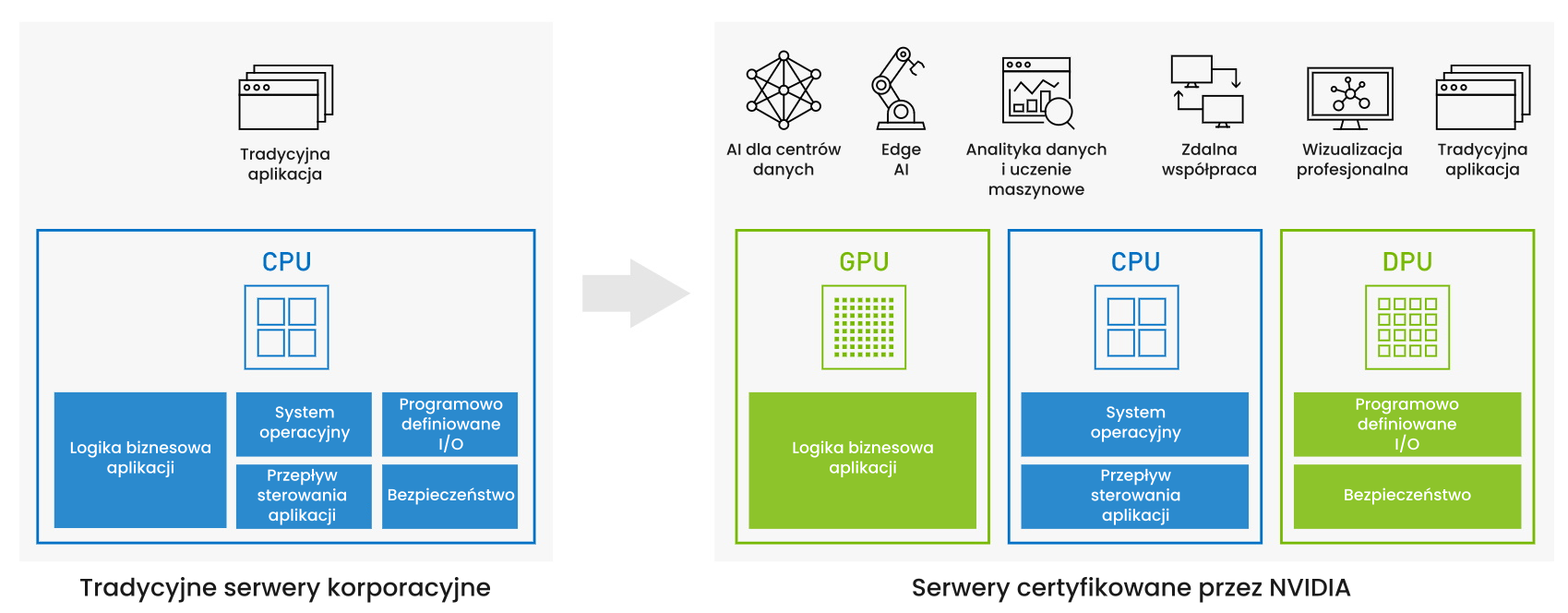

For years, classic CPU servers were designed with sequential workloads and general business applications in mind. With the growth of AI, data analysis and image processing, however, more and more tasks are highly parallel: the same operations are performed simultaneously on huge volumes of data.

Attempting to carry out such workloads solely on a CPU leads to low energy efficiency, scaling problems and unpredictable performance. Accelerated computing addresses these limitations by shifting the most intensive computations to an architecture designed specifically for parallel work, where GPUs and other accelerators take on the key computational role.
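What "highly parallel" means can be shown with a minimal Python sketch. It is only an illustration under stated assumptions: the `brighten` and `split` helpers and the 1024-pixel strip are hypothetical, and CPU threads stand in for the thousands of GPU cores that would do this work in a real system. The point is that each chunk can be processed independently, in any order, and the result is identical to the sequential run.

```python
from concurrent.futures import ThreadPoolExecutor


def brighten(pixels, gain=1.2):
    """Apply the same operation to every pixel; no pixel depends on another."""
    return [min(255, int(p * gain)) for p in pixels]


def split(data, parts):
    """Cut data into roughly equal contiguous chunks."""
    size = -(-len(data) // parts)  # ceiling division
    return [data[i:i + size] for i in range(0, len(data), size)]


# Hypothetical input: a 1024-pixel grayscale strip.
pixels = list(range(256)) * 4

# Sequential version: one worker walks through all the data.
sequential = brighten(pixels)

# Data-parallel version: four workers each process an independent chunk.
# pool.map returns results in input order, so the chunks can be rejoined as-is.
with ThreadPoolExecutor(max_workers=4) as pool:
    chunks = pool.map(brighten, split(pixels, 4))
    parallel = [p for chunk in chunks for p in chunk]

print("results identical:", parallel == sequential)
```

Python threads do not make this particular computation faster; the sketch shows only the independence property that lets a GPU spread the same work across many cores.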

Computers for accelerated computing are based on a heterogeneous architecture in which the CPU, GPUs and other accelerators cooperate as peer elements of the system. The CPU is responsible for control logic and coordination, while the GPUs handle the most computationally intensive parts of applications. Choosing this architecture well directly affects the performance, stability and cost of the entire solution.

The most commonly used accelerators today are GPU cards, whose thousands of computing cores make them well suited to AI systems, image analysis, simulations and data analytics.

The accompanying infrastructure is equally important: fast memory, PCIe 4.0 and 5.0 buses, NVMe drives, and efficient and stable cooling systems. In enterprise environments, DPUs are also playing an increasingly important role, accelerating network processing, security and data handling.
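To put the PCIe generations mentioned above into numbers, the following Python sketch estimates the theoretical bandwidth of a x16 slot. It uses the published per-lane signalling rates (16 GT/s for PCIe 4.0, 32 GT/s for PCIe 5.0) and 128b/130b line encoding. The function name and the x16 default are illustrative choices, and real-world throughput is lower because of protocol overhead.

```python
# Per-lane signalling rate in GT/s for the two PCIe generations named above.
TRANSFER_RATE_GT_S = {"PCIe 4.0": 16.0, "PCIe 5.0": 32.0}

# Both generations use 128b/130b encoding: 128 payload bits per 130 bits sent.
ENCODING_EFFICIENCY = 128 / 130


def link_bandwidth_gb_s(generation: str, lanes: int = 16) -> float:
    """Theoretical one-direction bandwidth in GB/s, before protocol overhead."""
    gigabits_per_second = TRANSFER_RATE_GT_S[generation] * ENCODING_EFFICIENCY * lanes
    return gigabits_per_second / 8  # bits to bytes


for generation in TRANSFER_RATE_GT_S:
    print(f"{generation} x16: {link_bandwidth_gb_s(generation):.1f} GB/s per direction")
```

Under these assumptions a PCIe 5.0 x16 slot offers roughly twice the bandwidth of a PCIe 4.0 x16 slot (about 63 GB/s versus about 31.5 GB/s per direction), which matters when large datasets or model weights must be moved between system memory, NVMe storage and the GPU.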

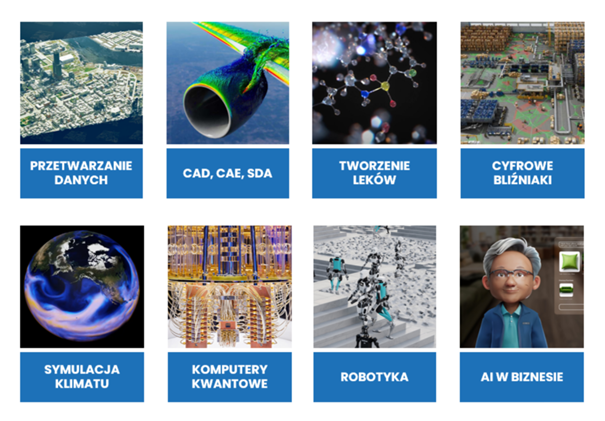

Accelerated computing is used wherever the scale of data, the complexity of computations or time requirements are growing.

In practice, this includes, among others:

The common denominator of these areas is the need to perform a huge number of similar operations in parallel – exactly where the accelerated computing architecture brings the greatest benefits.

See what GPU computers are used in AI systems, video analysis and simulations.

Selecting hardware for accelerated computing does not come down solely to choosing a GPU card. The following factors are of key importance:

Properly matching these elements allows you to build a system that will be not only efficient, but also stable and cost-effective throughout its life cycle.

Get advice on selecting a GPU computer for your project.

Depending on the nature of the project, accelerated computing takes different hardware forms:

GPU workstations – used in design, simulations, data analysis and the work of R&D and data science teams.

Discover our computers based on NVIDIA RTX.

Computers for AI inference – systems designed to run pre-trained models continuously, often close to the data source, e.g. in video analysis, automation or robotics.

DGX Spark / MSI EdgeXpert – a desktop AI supercomputer for technical teams

GPU servers and computing platforms – solutions for enterprise and data center environments, where scalability, computing power density and integration with existing IT infrastructure are key.

Modular NVIDIA MGX server platforms.

At Elmatic, we treat accelerated computing as a concrete hardware architecture, not an abstract concept. We help clients translate computational requirements into real configurations of GPU computers, workstations and AI servers that can be safely deployed and developed over time.

Our competencies include:

We deliver both ready-made configurations and solutions designed for specific scenarios – from single workstations to extensive computing infrastructure based on NVIDIA RTX and MGX platforms.

The greatest value of accelerated computing comes when it is an element of a well-thought-out architecture, not an add-on to an existing system. That is why at Elmatic we combine hardware knowledge, familiarity with NVIDIA platforms and practical implementation experience, designing systems tailored to real workloads – from edge to data center.

Talk to an Elmatic engineer about selecting a GPU computer or AI server.