Edge computing vs. cloud – where should data be analyzed in industrial systems?

In modern IT systems, both industrial and enterprise, a growing share of data is created close to its source: in sensors, PLC controllers, vision cameras, security systems and IoT devices, as well as in business applications and IT infrastructure. As a result, the key design question has shifted: where should this data actually be analyzed so that the system is fast, stable and cost-effective to maintain?

Not all data keeps the same value over time, especially in industrial systems, automation and AI-based solutions. Some of it requires a reaction within milliseconds, while other data only becomes meaningful once aggregated over many hours or days, or across many locations. That is why the decision on where to process data directly affects:

- system response time,

- transmission and infrastructure costs,

- resilience to network failures,

- security and control over data.

Rather than treating edge computing and the cloud as competing approaches, engineers increasingly design architectures in which each plays a clearly defined role. In practice, however, local processing is the starting point for most stable and scalable systems. Below we show where each approach is justified, with examples from industry and IT.

The question is therefore rarely "edge or cloud".

Much more often, it is how much processing can be carried out locally before reaching for the central layer.

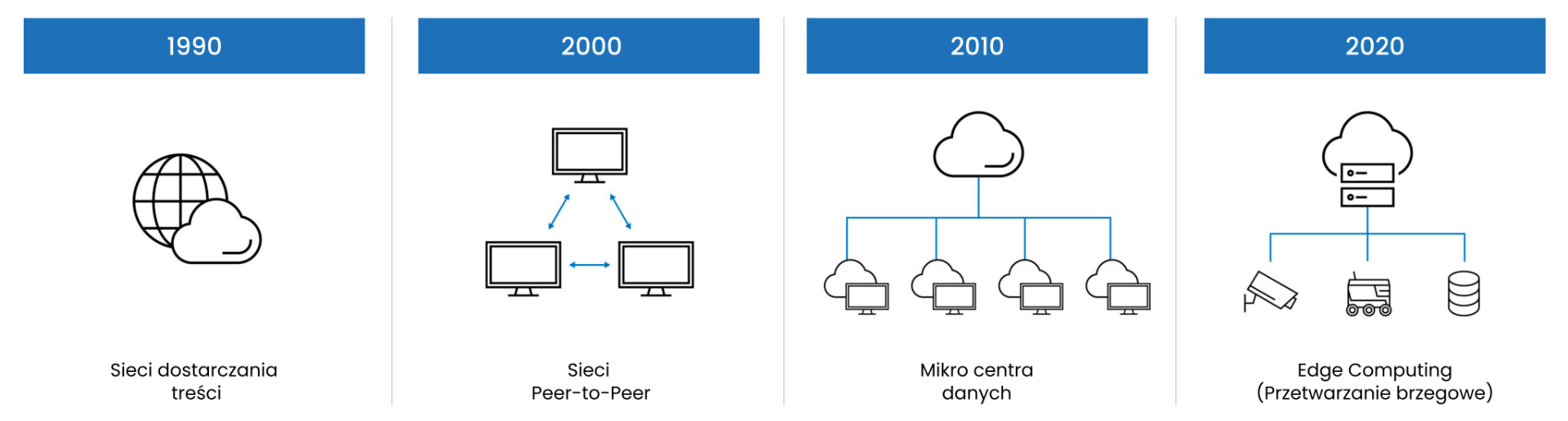

History of Edge Computing

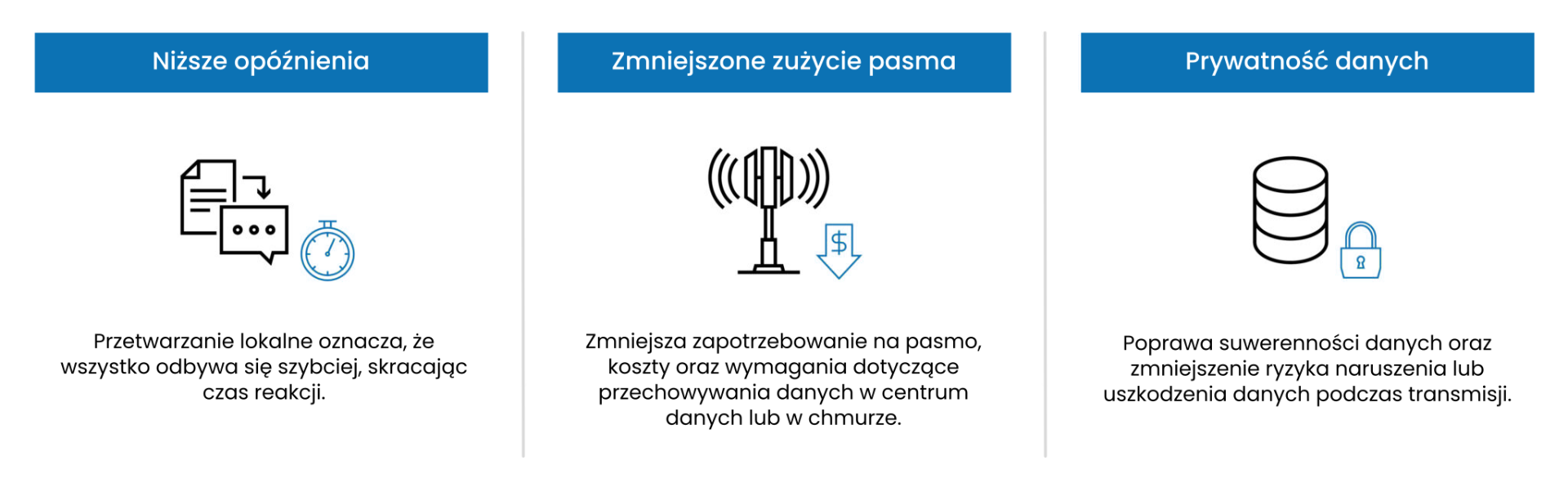

Lower latency, smaller bandwidth requirements, data privacy, higher efficiency

Edge computing involves analyzing data directly where it is created: at the level of the machine, production line, vehicle or local computing node. The key advantages of this approach are minimal latency and the independence of critical functions from the cloud connection.

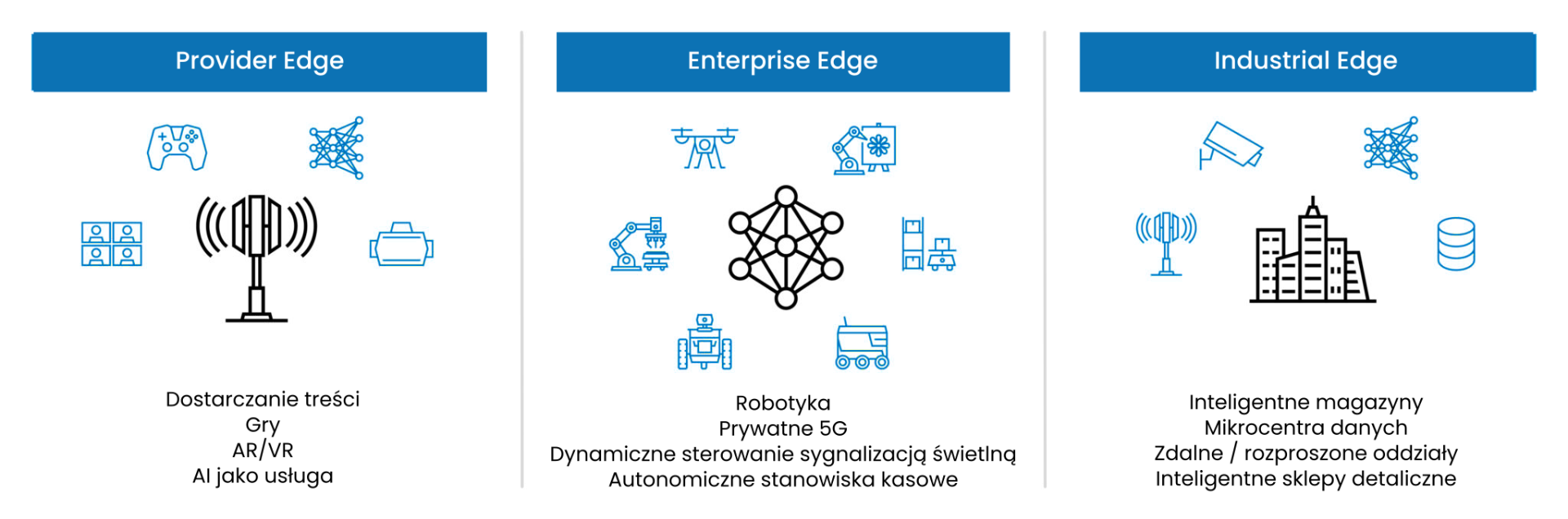

In practice, edge computing is used in most modern industrial deployments and, increasingly, in enterprise environments, particularly in:

- vision-based quality control systems, where the decision to reject a part must be made immediately,

- local analysis of video and data from security cameras in office buildings, campuses and logistics centers,

- image and video analysis (e.g. detection of events, objects, anomalies),

- real-time control and automation systems,

- monitoring of machines and critical infrastructure,

- analysis of operational data in data centers, server rooms and distributed company branches.

Instead of full data streams (e.g. high-resolution video or raw sensor signals) being sent upstream, analysis takes place locally and only the results are forwarded to higher-level systems: alarms, metadata, statistics or selected data samples. This model most often turns out to be the most cost-effective and operationally efficient. It reduces network traffic and allows the system to scale without a sharp increase in transmission costs.
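A minimal sketch of this reduction, assuming a hypothetical sensor that delivers windows of raw float readings: instead of forwarding every sample, the edge node computes a few statistics and an alarm flag. The sensor name, window size and threshold are illustrative assumptions, and JSON is used only to make the size difference visible.

```python
import json
import statistics
from dataclasses import dataclass, asdict


@dataclass
class WindowSummary:
    """Compact result forwarded upstream instead of the raw samples."""
    sensor_id: str
    count: int
    mean: float
    minimum: float
    maximum: float
    alarm: bool


def summarize_window(sensor_id: str, samples: list[float], alarm_threshold: float) -> WindowSummary:
    """Reduce one window of raw readings to a few statistics and an alarm flag."""
    peak = max(samples)
    return WindowSummary(
        sensor_id=sensor_id,
        count=len(samples),
        mean=statistics.fmean(samples),
        minimum=min(samples),
        maximum=peak,
        alarm=peak > alarm_threshold,
    )


# Hypothetical window: 1000 vibration readings with a single spike.
raw = [0.5] * 999 + [0.9]
summary = summarize_window("press-01/vibration", raw, alarm_threshold=0.8)

# Compare what would travel over the network in each case (JSON for illustration).
raw_bytes = len(json.dumps(raw).encode())
summary_bytes = len(json.dumps(asdict(summary)).encode())
print(f"raw: {raw_bytes} B, summary: {summary_bytes} B, alarm: {summary.alarm}")
```

The raw window still exists on the edge node, so it can be kept locally as a "selected data sample" when an alarm fires, while normal windows travel upstream only as summaries.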

Resilience to connectivity problems is another important aspect. In industrial, warehouse or mobile environments, a stable internet connection is not always guaranteed, yet the system must operate continuously. Edge computing keeps the process running even during a temporary loss of connection to the cloud.
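On the upstream side, this behavior is commonly implemented as a store-and-forward buffer: the local process keeps producing results, and they are queued until the uplink returns. The sketch below assumes a hypothetical `send` function standing in for whatever uplink the system actually uses, and keeps the queue in memory; a production system would typically persist it so it survives a restart.

```python
from collections import deque
from typing import Callable


class StoreAndForward:
    """Queue results locally while the uplink is down and send them when it returns.

    `send` is a hypothetical uplink function that returns True on success.
    The queue is bounded: once full, the oldest entries are discarded first.
    """

    def __init__(self, send: Callable[[dict], bool], max_items: int = 10_000):
        self._send = send
        self._queue = deque(maxlen=max_items)  # in-memory only in this sketch

    def publish(self, message: dict) -> None:
        """Enqueue a result and try to deliver everything pending."""
        self._queue.append(message)
        self.flush()

    def flush(self) -> int:
        """Send queued messages oldest-first; stop at the first failure."""
        sent = 0
        while self._queue:
            if not self._send(self._queue[0]):
                break  # uplink still unavailable, keep the message for later
            self._queue.popleft()
            sent += 1
        return sent

    def pending(self) -> int:
        return len(self._queue)


# Simulated uplink that is down at first and recovers later.
link_up = False
delivered = []


def fake_send(message: dict) -> bool:
    if link_up:
        delivered.append(message)
        return True
    return False


buf = StoreAndForward(fake_send)
buf.publish({"event": "overtemp", "line": 2})  # queued, link down
buf.publish({"event": "overtemp", "line": 3})  # queued, link down
link_up = True
buf.flush()                                     # both delivered in order
print(delivered, buf.pending())
```

The bounded queue is a deliberate trade-off: during a very long outage, the oldest results are dropped rather than letting the edge device run out of memory.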

Cloud – when scale and a holistic view are needed

Cloud-based data processing is used, in industry as well as in classic enterprise environments, where what matters most is centralized information and large computational scale, and response time is not critical. Unlike edge computing, the cloud makes it possible to collect data from multiple locations and analyze it in a single, coherent environment.

Typical cloud applications span both industrial systems and enterprise IT solutions:

- training and validation of AI models,

- analysis of historical data and long-term trends,

- reporting, dashboards and business analytics,

- comparing process performance between plants or production lines.

Thanks to flexible scaling of computing power, the cloud makes it possible to carry out tasks that would be difficult to maintain locally – especially when the load varies over time. At the same time, limitations must be taken into account: delays resulting from data transmission, transfer costs, and dependence on a stable network infrastructure.
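Transfer costs are easy to underestimate. A back-of-the-envelope calculation shows the scale of the difference between streaming raw video to the cloud and sending only event metadata; the camera count, bitrate, event rate and event size are all assumptions chosen for illustration.

```python
SECONDS_PER_DAY = 24 * 3600


def stream_gb_per_day(bitrate_mbit_s: float) -> float:
    """Daily volume of a continuous stream at the given bitrate, in GB (10**9 bytes)."""
    return bitrate_mbit_s * 1e6 * SECONDS_PER_DAY / 8 / 1e9


def events_gb_per_day(events_per_s: float, bytes_per_event: float) -> float:
    """Daily volume of event metadata, in GB (10**9 bytes)."""
    return events_per_s * bytes_per_event * SECONDS_PER_DAY / 1e9


# All inputs below are assumptions chosen for illustration.
cameras = 20
raw_total = cameras * stream_gb_per_day(8.0)        # 8 Mbit/s per camera
meta_total = cameras * events_gb_per_day(1.0, 500)  # 1 event/s, 500 B per event
print(f"raw video: {raw_total:,.1f} GB/day, metadata only: {meta_total:,.2f} GB/day")
```

With these assumptions, the raw streams amount to about 1.7 TB per day against under 1 GB of metadata, a ratio of 2000:1.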

For this reason, the cloud works best as a central layer of the system, complementing local processing. In practice, it is rarely the first and main place for the analysis of operational data.

Edge or cloud – a design decision, not an ideological one

In real deployments, systems based solely on the edge or solely on the cloud are rare. The key is to match where processing happens to the nature of the data and the requirements of the process.

If the system has to react in real time, operate autonomously or process sensitive data – the natural and most often recommended choice is edge computing. If, on the other hand, the goal is to analyze large data sets, train models or aggregate information from multiple locations – the cloud gains the advantage.
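A timing budget makes the real-time argument concrete. Take the reject decision in vision-based quality control: the time available is set by how long the part needs to travel from the camera to the ejector. The sketch below assumes a hypothetical line geometry and hypothetical stage latencies; none of the numbers come from a specific installation.

```python
def time_budget_ms(conveyor_speed_m_s: float, camera_to_ejector_m: float) -> float:
    """Time from image capture until the part reaches the ejector, in ms."""
    return camera_to_ejector_m / conveyor_speed_m_s * 1000.0


def fits(budget_ms: float, *stage_ms: float) -> bool:
    """True if the summed latencies of all stages fit within the budget."""
    return sum(stage_ms) <= budget_ms


# Assumed line geometry: 2 m/s conveyor, ejector 0.3 m after the camera.
budget = time_budget_ms(conveyor_speed_m_s=2.0, camera_to_ejector_m=0.3)

# Assumed stage latencies in ms.
edge_ok = fits(budget, 40.0, 5.0)                 # local inference + I/O signal
cloud_ok = fits(budget, 120.0, 60.0, 30.0, 10.0)  # upload + WAN RTT + inference + reply
print(f"budget {budget:.0f} ms | edge: {edge_ok} | cloud: {cloud_ok}")
```

Under these assumptions, the local path uses 45 ms of a 150 ms budget, while the cloud path needs 220 ms and misses the deadline before any network jitter is counted.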

That is why the dominant approach in industrial and enterprise environments today is a hybrid architecture, in which local processing plays the primary role and the cloud a supporting one. Data is analyzed locally where speed and context matter, and selected results or historical data are then sent to the cloud for further processing. This combines the advantages of both worlds while limiting the drawbacks of each.

Edge computing in enterprise environments – why on-prem is coming back

Although the cloud has been the natural direction for IT infrastructure development for years, more and more enterprise organizations are once again investing in local data processing. The reason is not technological backtracking, but the maturity of projects and real-world experience with the costs and limitations of cloud-only approaches.

In enterprise environments, edge computing is used, for example, in:

- local video analysis (security, monitoring, retail, logistics),

- IT systems requiring low latency and predictable performance,

- processing of sensitive data that should not leave the organization's infrastructure,

- distributed branches and campuses, where centralization in the cloud generates delays and costs.

An increasingly common model combines local operational processing (on-prem / edge) with the cloud as an analytical, reporting or training layer. Such an architecture provides greater control over data, more stable costs and more predictable system behavior.

Architecture vs. hardware – how to select computing platforms

In edge–cloud architectures, the type of data processing, rather than technological fashion, should determine the choice of hardware. In most cases, this means starting the design with the local layer. Not every edge solution requires GPU acceleration: in many cases, energy-efficient, reliable devices that handle data collection, initial filtering and communication are sufficient.

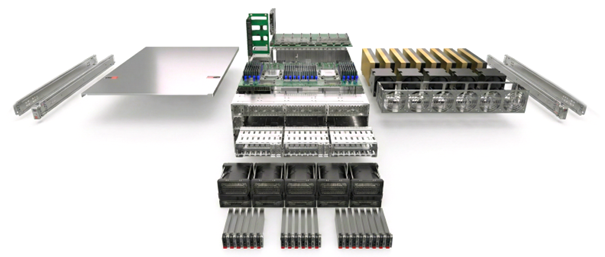

The lowest level of the edge architecture is often made up of measurement and communication modules, such as the ADAM series, together with Mini PCs, Box PCs or Panel PCs. These devices are designed for continuous operation, with a long life cycle, industrial interfaces and the option of mounting directly on the machine or in a control cabinet.

They are responsible for integration with sensors and machines, preliminary data processing and communication with higher-level systems.
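One common form of preliminary processing is deadband filtering (report by exception): a value is forwarded only when it has changed by more than a set amount since the last value sent. The sketch below is a generic illustration, not the behavior or configuration of any particular device; the band width and the temperature readings are assumptions.

```python
from typing import Iterable, Iterator, Optional, Tuple

Reading = Tuple[float, float]  # (timestamp in seconds, value)


def deadband(readings: Iterable[Reading], band: float) -> Iterator[Reading]:
    """Forward a reading only if it differs from the last forwarded value by more than `band`."""
    last_sent: Optional[float] = None
    for ts, value in readings:
        if last_sent is None or abs(value - last_sent) > band:
            last_sent = value
            yield ts, value


# Hypothetical temperature readings (°C), one per second.
readings = [(0.0, 20.0), (1.0, 20.1), (2.0, 20.2), (3.0, 20.6), (4.0, 20.7), (5.0, 19.9)]
forwarded = list(deadband(readings, band=0.5))
print(forwarded)
```

Comparing against the last forwarded value, rather than the previous sample, prevents a slow drift from going unreported: small steps accumulate until they cross the band.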

In more demanding scenarios, such as image analysis, real-time signal processing or local AI algorithms, edge platforms with GPU acceleration are used, e.g. solutions based on NVIDIA Jetson. This type of edge computer combines low latency with the ability to run AI models directly at the machine or camera, without having to send raw data to the cloud.

Higher up in the architecture are Elmatic workstations with NVIDIA RTX GPUs, used for data analysis, model testing, and engineering and analytical work, including in enterprise settings such as data science, IT security or video analysis teams. They form a bridge between the edge layer and the central infrastructure.

In enterprise and data center applications, NVIDIA MGX platforms serve as the central computing layer, making it possible to build scalable AI infrastructure that keeps pace with growing workloads.

An architecture designed in this way – from simple edge devices to extensive central infrastructure – makes it possible to build systems in which each layer performs exactly the tasks it was designed for.

How to make the decision – short selection criteria

If you answer "yes" to any of the questions below, local processing should be the first option to consider:

- does the system have to react in real time or near real time?

- are delays or interruptions in connectivity unacceptable?

- is the processed data sensitive or subject to additional regulations?

- are data transfer costs to the cloud growing faster than the value of the analysis?

In such cases, an edge-first architecture makes it possible to build a stable foundation that can, if necessary, be extended with a cloud layer.
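The checklist can also be written down as a small helper, for instance to record design decisions consistently across projects. This is a hypothetical sketch: the field names are illustrative labels for the four questions, and the rule simply mirrors the "any yes means edge-first" guidance.

```python
from dataclasses import dataclass, fields


@dataclass
class Requirements:
    """Answers to the four selection questions (field names are illustrative)."""
    real_time: bool
    outage_intolerant: bool
    sensitive_data: bool
    transfer_cost_outgrows_value: bool


def recommend(req: Requirements) -> tuple[str, list[str]]:
    """Return 'edge-first' plus the triggering questions if any answer is 'yes'."""
    reasons = [f.name for f in fields(req) if getattr(req, f.name)]
    if reasons:
        return "edge-first", reasons
    return "no edge-specific constraint", reasons


# Hypothetical production line: real-time reaction, no tolerance for outages.
line = Requirements(real_time=True, outage_intolerant=True,
                    sensitive_data=False, transfer_cost_outgrows_value=False)
print(recommend(line))
```

A "no edge-specific constraint" result does not make the cloud mandatory; it only means the checklist does not force local processing, and the hybrid reasoning still applies.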

How to explore the topic further and where to start

When planning the deployment of edge computing, a cloud-based system or a hybrid architecture, it is crucial to match the hardware and architecture to the real workload and process requirements. In practice, this very often means that local processing should be the first option to consider.

This is exactly what the ai.elmatic.net website is dedicated to – a knowledge center on accelerated computing, NVIDIA platforms and practical applications of AI and edge computing in industry and IT. There you will find descriptions of architectures, use-case examples and an overview of hardware platforms – from simple edge solutions to advanced data center infrastructure.

If you need support in selecting hardware or want to verify the assumptions of your project, you can also take advantage of the consultations and ready-made configurations available in the Elmatic offer.